HRV4Training


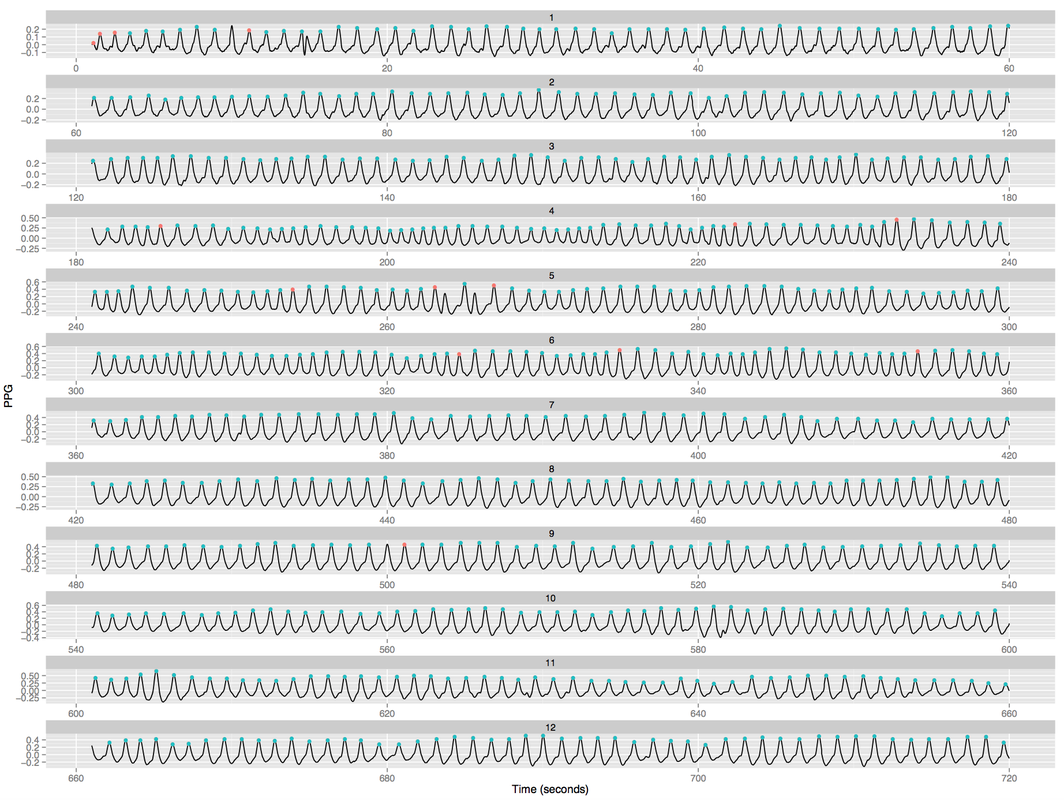

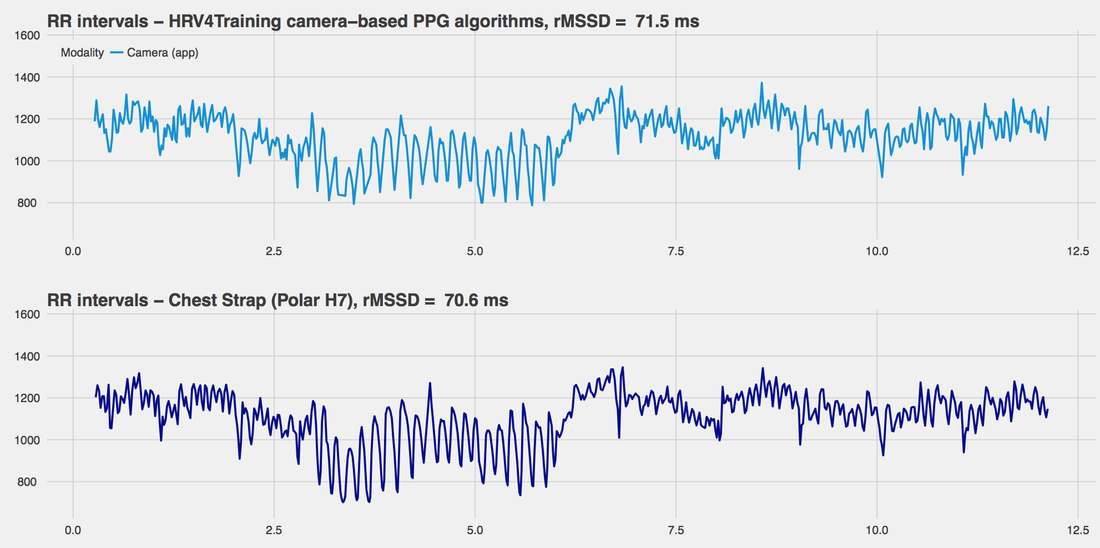

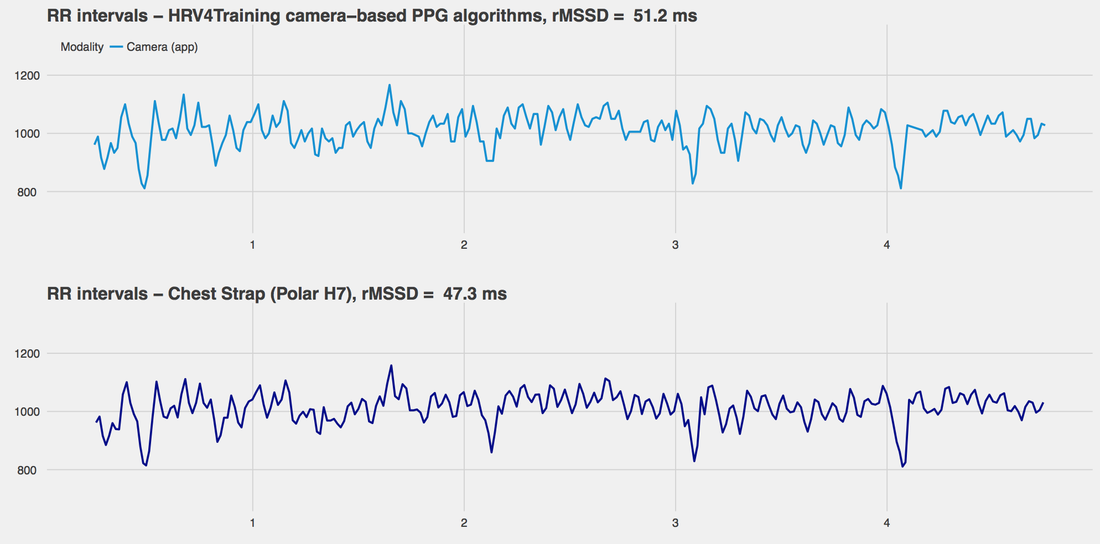

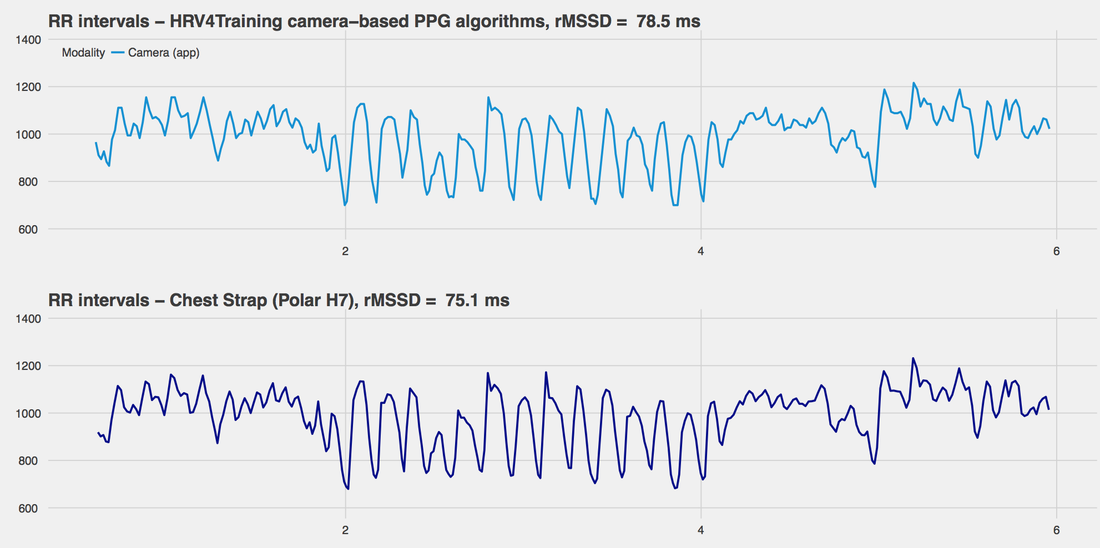

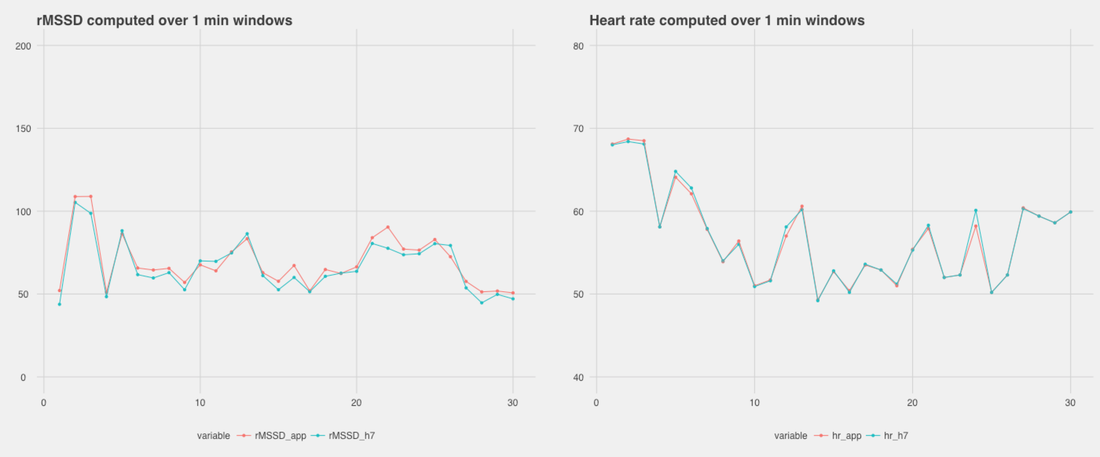

This post is a quick overview of the changes implemented in HRV4Training to support the latest iPhones. While the iPhone 7 required no changes to our algorithms, the 7+ is equipped with two cameras, and a few users reported having trouble getting a stable reading. We finally received our testing devices this week and implemented the necessary changes to support the iPhone 7+. As some of you reported, there were two main issues: 1) heart rate was higher than it should have been, and 2) the phone was using the "wrong" camera, the one quite far from the flash, which made it difficult to get a reading at all.

The good news is that both issues have been fixed, and an update will be available in 1-2 weeks to extend support of the camera-based algorithms to the iPhone 7+ as well. We would like to thank everyone for your patience and feedback, as this input was very useful in quickly identifying the main sources of trouble and fixing them.

First, we switched the camera used by the app, as shown in the accompanying image. By using the camera closest to the flash, we ensure proper lighting, which is necessary to capture the changes in skin color due to blood flow. You can't really get it wrong, as covering the other camera will be obvious in the camera view in the app.

Secondly, we had to make some additional adjustments to ensure that the sampling frequency and interpolation were working as in previous versions. Once we were able to make these adjustments, we ran a preliminary validation using our trusted Polar H7 and a prototype app we developed for our clinical studies. This special app can collect data from both the camera and the Bluetooth sensor (the same app described here).

Data cannot be perfectly synchronized because it is not timestamped by the Bluetooth sensor. However, we can log the time at which data is collected, split the data into windows based on that time, and then compute HRV features from the RR intervals included in each window.
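The windowing step can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the code used in our apps: it assumes each beat is stored as a hypothetical `(arrival_time_s, rr_ms)` pair, uses fixed 60-second windows, and the function names `split_into_windows`, `rmssd` and `mean_hr` are made up for this example.

```python
import math

def split_into_windows(beats, window_s=60.0):
    """Group (arrival_time_s, rr_ms) pairs into consecutive fixed-length windows.

    Assumption: arrival_time_s is the time logged by the phone when the
    RR interval was received, since the sensor itself does not timestamp it.
    """
    if not beats:
        return []
    start = beats[0][0]
    windows = {}
    for t, rr in beats:
        idx = int((t - start) // window_s)  # index of the window this beat falls in
        windows.setdefault(idx, []).append(rr)
    return [windows[i] for i in sorted(windows)]

def rmssd(rr_ms):
    """Root mean square of successive RR differences, in ms."""
    if len(rr_ms) < 2:
        return float("nan")
    diffs = [b - a for a, b in zip(rr_ms, rr_ms[1:])]
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))

def mean_hr(rr_ms):
    """Average heart rate in beats per minute, from RR intervals in ms."""
    return 60000.0 * len(rr_ms) / sum(rr_ms)

# Toy data: two beats in the first minute, two in the second.
beats = [(0.0, 1000.0), (1.0, 1000.0), (61.0, 800.0), (62.0, 900.0)]
for window in split_into_windows(beats):
    print(rmssd(window), mean_hr(window))
```

Running the same functions on the camera and on the chest strap data gives one rMSSD and one heart rate value per window for each source, which can then be compared window by window.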
With this procedure we are typically off by one beat at most, which translates into a small variation over a minute of data.

First, let's look at an example of the PPG data collected using the app (12 minutes of data), where you can also see discarded beats in red. These are typically due to an initial stabilization phase and to artifacts found during the measurement, for example intervals that are too close or too far apart and might be due to noise, ectopic beats, and so on.

After collecting accurate PPG, we need to extract peak-to-peak intervals, basically our RR intervals. The figures show the usual time series for segments of data collected under different conditions (rest, deep breathing, etc.). You can see how the RR intervals match the ones from the Polar sensor very well. The figures also report the HRV value (rMSSD in ms) for the entire segment. The first segment is the one that comes from the PPG data above. You can easily spot the deep breathing segment, where oscillations due to RSA are quite obvious (minute 3 to 6). There is also another segment for self-paced breathing (just lying down relaxing), and finally another one also containing deep breathing.

The data shows quite clearly how RR intervals can be extracted accurately using the iPhone 7+ as well. We also report rMSSD and heart rate data for 1-minute windows, as this is our main use case in HRV4Training. Data shown in this post comes from one person only, and we will be collecting more to cover a broader range of HR and rMSSD values in the coming weeks.
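To give an idea of what discarding intervals that are too close or too far apart can look like, here is a minimal Python sketch. It is not the filter used in HRV4Training: the function name `filter_rr`, the 300-2000 ms range and the 25% maximum change between consecutive accepted intervals are all assumptions picked for illustration.

```python
def filter_rr(rr_ms, min_ms=300.0, max_ms=2000.0, max_rel_change=0.25):
    """Split RR intervals (ms) into kept and discarded lists.

    An interval is discarded if it falls outside [min_ms, max_ms], or if it
    differs from the last kept interval by more than max_rel_change.
    All thresholds are illustrative assumptions.
    """
    kept, discarded = [], []
    for rr in rr_ms:
        ok = min_ms <= rr <= max_ms
        if ok and kept:
            # Compare against the last accepted interval, not the last raw one.
            ok = abs(rr - kept[-1]) <= max_rel_change * kept[-1]
        (kept if ok else discarded).append(rr)
    return kept, discarded

kept, discarded = filter_rr([1000.0, 1020.0, 400.0, 990.0, 2500.0, 1010.0])
print(kept)       # intervals retained for HRV analysis
print(discarded)  # intervals flagged as likely artifacts
```

A simple rule like this depends on the first accepted interval being a good one, which is one reason an initial stabilization phase is usually excluded before any filtering is applied.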

The main point of this post was simply to find (and fix) the issues that came up due to the dual camera present in the iPhone 7+, and we believe these issues have been addressed. Hence, we will be releasing an update in the next 1-2 weeks so that you can start using the app with the iPhone 7+ as well (updates will come for both HRV4Training and Camera HRV).

